The Role of an AI Ethicist in the Age of Artificial Intelligence

As artificial intelligence (AI) continues to evolve and integrate into various aspects of our daily lives, the role of an AI ethicist has become increasingly vital. These professionals are tasked with ensuring that AI technologies are developed and deployed in ways that are ethical, fair, and beneficial to society as a whole.

What is an AI Ethicist?

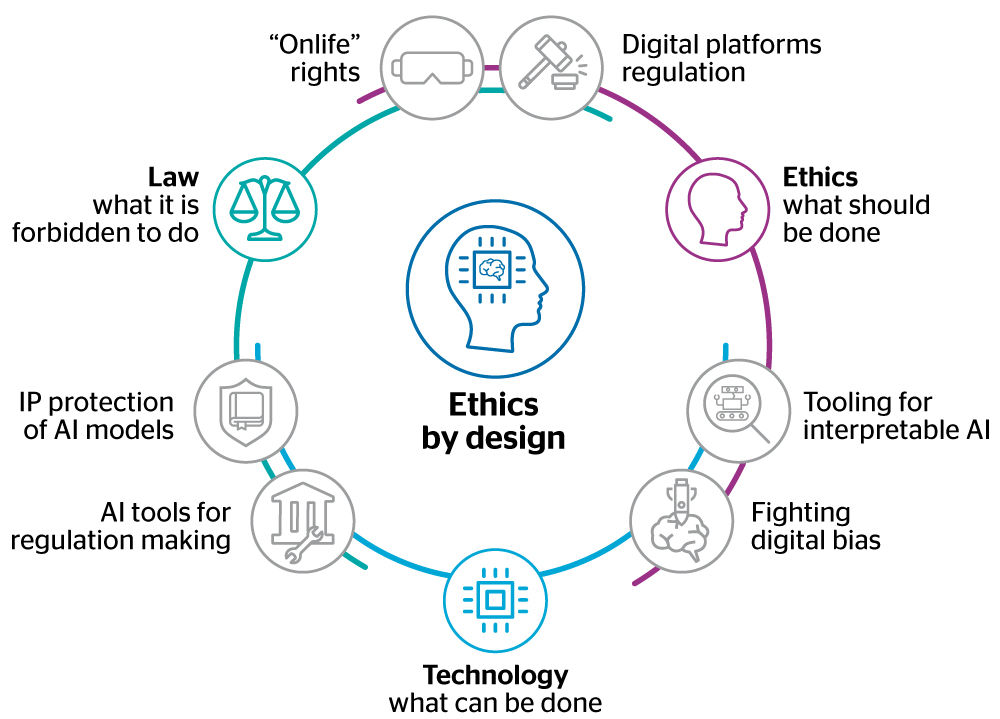

An AI ethicist is a specialist who examines the moral implications of AI technologies. They work at the intersection of technology, philosophy, and law to address issues such as privacy, bias, accountability, and transparency in AI systems. Their primary goal is to ensure that AI applications align with ethical standards and societal values.

Key Responsibilities

- Identifying Ethical Risks: AI ethicists analyze potential risks associated with AI systems, including biases in algorithms that could lead to unfair treatment or discrimination.

- Developing Ethical Guidelines: They contribute to creating frameworks and guidelines that govern the ethical use of AI technologies.

- Advising Stakeholders: These experts provide guidance to developers, companies, policymakers, and other stakeholders on how to implement ethical practices in their AI projects.

- Promoting Transparency: Ensuring that AI systems are transparent and explainable is a critical aspect of their role. This helps build trust among users and stakeholders.

The Importance of Ethics in AI

The rapid advancement of AI technology brings both opportunities and challenges. While it has the potential to transform industries such as healthcare, finance, and transportation, it also raises concerns about privacy violations, job displacement, and biased decision-making. Involving an ethicist helps organizations address these concerns proactively rather than after harm has occurred.

A key issue is algorithmic bias, which occurs when an AI system inadvertently perpetuates prejudices present in its training data. An ethicist works to identify these biases early and collaborates with developers to mitigate them, which helps produce more equitable outcomes for all users.

The Future of AI Ethics

As technology continues to advance at a rapid pace, the demand for skilled AI ethicists will likely grow. Organizations across various sectors will increasingly recognize the importance of incorporating ethical considerations into their technological strategies.

The future will see more interdisciplinary collaboration between technologists, ethicists, legal experts, and policymakers. This cooperation will be essential in crafting regulations that keep pace with technological innovation while safeguarding human rights and societal values.

Conclusion

The role of an AI ethicist is indispensable in navigating the complex landscape of artificial intelligence. By ensuring that ethical principles guide technological development and deployment, these professionals help create a future where technology serves humanity positively and equitably.

Five Key Benefits of AI Ethicists: Promoting Fairness, Responsibility, and Trust in AI Technologies

- AI ethicists help identify and mitigate biases in AI algorithms, promoting fairness and equity.

- They contribute to the development of ethical guidelines that govern the responsible use of AI technologies.

- AI ethicists advise stakeholders on best practices for implementing ethical standards in AI projects.

- Their work promotes transparency in AI systems, building trust among users and society.

- They play a crucial role in addressing moral implications of AI technologies, ensuring alignment with societal values.

Challenges Faced by AI Ethicists: Conflicting Viewpoints, Lack of Enforcement, Marginalization, and Rapid Technological Change

- Potential for conflicting ethical viewpoints among AI ethicists, leading to challenges in consensus-building.

- Limited enforcement mechanisms for ensuring adherence to ethical guidelines developed by AI ethicists.

- Risk of AI ethicists being marginalized or overlooked in decision-making processes within organizations.

- Difficulty in addressing the rapidly evolving nature of AI technology, requiring constant adaptation of ethical frameworks.

AI ethicists help identify and mitigate biases in AI algorithms, promoting fairness and equity.

Identifying and mitigating bias in AI algorithms is one of the most concrete ways AI ethicists promote fairness and equity. By scrutinizing the datasets used to train AI systems, they can uncover embedded prejudices that might lead to discriminatory outcomes. These biases can arise from many sources, such as historical data that reflects societal inequities or flawed data-collection processes. AI ethicists then work with developers to adjust algorithms and apply corrective measures so that systems perform more equitably across demographic groups. This proactive approach makes AI systems more reliable for the people they affect and builds public trust by demonstrating a commitment to ethical standards and social justice.
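One common way to make this kind of bias screening concrete is to compare outcome rates across groups. The sketch below is a minimal, hypothetical illustration rather than a method this article prescribes: it computes each group's selection rate (the share of favorable decisions) and divides it by the highest group's rate. The 0.8 threshold is an assumption borrowed from the "four-fifths" rule of thumb used in US employment-selection guidance, and the function names and sample data are invented for illustration.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of favorable outcomes per group.

    `records` is an iterable of (group, outcome) pairs, where outcome is
    True for a favorable decision (e.g. an approved application).
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact(records, threshold=0.8):
    """Compare each group's selection rate with the highest-rate group.

    Returns a dict mapping group -> (ratio, flagged). `flagged` is True
    when the ratio falls below `threshold` (0.8 is an assumed rule of thumb).
    """
    rates = selection_rates(records)
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        # If no group received any favorable outcome, no ratio is meaningful;
        # treat every group as equal rather than dividing by zero.
        ratio = rate / best if best > 0 else 1.0
        report[group] = (ratio, ratio < threshold)
    return report


if __name__ == "__main__":
    # Hypothetical decisions for two groups, for illustration only.
    decisions = (
        [("A", True)] * 60 + [("A", False)] * 40
        + [("B", True)] * 30 + [("B", False)] * 70
    )
    for group, (ratio, flagged) in sorted(disparate_impact(decisions).items()):
        print(f"{group}: ratio={ratio:.2f} flagged={flagged}")
```

With the sample data, group A is approved 60% of the time and group B 30%, so B's ratio is 0.50 and it is flagged. A low ratio is a signal to investigate the data and model, not proof of discrimination on its own, and no single metric captures every notion of fairness.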

They contribute to the development of ethical guidelines that govern the responsible use of AI technologies.

Ethical guidelines give developers, companies, and policymakers a shared framework for the responsible use of AI, and AI ethicists help write them. These guidelines are meant to ensure that AI systems are designed and deployed with attention to human rights, fairness, and societal impact. By setting clear standards and best practices, AI ethicists help prevent abuses and unintended consequences of AI deployment. Their work promotes accountability and transparency, which builds public trust while steering innovation toward ethical principles. This proactive approach both mitigates risks and maximizes the positive potential of AI to benefit society as a whole.

AI ethicists advise stakeholders on best practices for implementing ethical standards in AI projects.

AI ethicists also advise stakeholders on best practices for implementing ethical standards in AI projects. Working with developers, companies, and policymakers, they help identify ethical concerns early in the project lifecycle so that technologies are developed and deployed responsibly, with risks minimized and benefits maximized. They offer insight on issues such as data privacy, algorithmic bias, and transparency, helping stakeholders build AI systems that align with societal values and legal requirements. Through this guidance, AI ethicists help build trust in AI technologies and public confidence in their use across sectors.

Their work promotes transparency in AI systems, building trust among users and society.

Transparency is essential for building trust among users and society at large, and AI ethicists advocate for it directly. By pushing for clear, understandable AI processes, they help ensure that these systems operate openly and accountably. Transparency lets users see how decisions are made, understand what data is being used, and recognize the technology's limitations. As a result, individuals and organizations can rely on AI tools with more confidence, knowing that oversight exists to prevent misuse or unintended consequences. Ultimately, this work encourages broader acceptance and responsible use of AI innovations.

They play a crucial role in addressing moral implications of AI technologies, ensuring alignment with societal values.

AI ethicists help organizations and the public navigate the moral implications of AI technologies and keep these innovations aligned with societal values. As AI systems become more integrated into daily life, they raise complex ethical challenges that require careful consideration. AI ethicists identify and address issues such as bias, privacy, and the impact on employment, and they help create guidelines and frameworks that promote fairness, transparency, and accountability. Their efforts help ensure that AI advances technological capability while upholding the ethical standards society expects. This alignment is essential for fostering public trust in AI systems and for distributing their benefits equitably across all segments of society.

Potential for conflicting ethical viewpoints among AI ethicists, leading to challenges in consensus-building.

The field of AI ethics is inherently complex, and one significant challenge is the potential for conflicting ethical viewpoints among AI ethicists. As these professionals come from diverse backgrounds—ranging from philosophy and law to technology and sociology—they often bring different perspectives on what constitutes ethical AI practices. This diversity can lead to disagreements on key issues, such as data privacy, algorithmic fairness, and the balance between innovation and regulation. Such conflicts can make it difficult to reach a consensus on ethical guidelines or standards, potentially slowing down the implementation of consistent and effective ethical frameworks across industries. As a result, organizations may struggle to navigate these differences while striving to develop AI technologies that align with varied ethical considerations.

Limited enforcement mechanisms for ensuring adherence to ethical guidelines developed by AI ethicists.

AI ethicists face a significant challenge in the form of limited enforcement mechanisms to ensure adherence to the ethical guidelines they develop. While these professionals can identify potential ethical issues and propose comprehensive frameworks, their recommendations often lack the binding power needed to compel organizations to comply. This gap between ethical guidance and practical implementation can result in companies prioritizing innovation and profitability over ethical considerations. Without robust regulatory frameworks or industry-wide standards that mandate adherence, AI systems may continue to operate without fully addressing concerns such as bias, privacy, and accountability. Consequently, the effectiveness of AI ethicists is often contingent upon voluntary compliance by organizations, which can vary widely depending on corporate culture and priorities.

Risk of AI ethicists being marginalized or overlooked in decision-making processes within organizations.

One significant challenge is the risk that AI ethicists will be marginalized or overlooked in organizational decision-making. Despite their role in identifying and addressing ethical concerns, they often struggle to be heard amid pressure to innovate and deploy new technologies quickly. When ethical considerations are sidelined, the resulting AI systems may inadvertently perpetuate biases or infringe on privacy rights. Organizations that prioritize speed and profitability over ethical deliberation risk building technologies that harm individuals and society at large. Integrating AI ethicists into decision-making processes is therefore essential for creating responsible and equitable AI solutions.

Difficulty in addressing the rapidly evolving nature of AI technology, requiring constant adaptation of ethical frameworks.

The rapidly evolving nature of AI technology presents a significant challenge for AI ethicists, as it demands the continuous adaptation and updating of ethical frameworks. As AI systems become more sophisticated and integrated into various sectors, new ethical dilemmas and unforeseen consequences frequently arise. This dynamic environment requires ethicists to not only keep pace with technological advancements but also anticipate potential future issues. The constant need for adaptation can strain resources and make it difficult to establish stable guidelines that remain relevant over time. Consequently, AI ethicists must engage in ongoing research, collaboration, and dialogue with technologists to ensure that ethical standards are both current and effective in addressing the complexities of emerging AI technologies.